Now, let's consider how an inner join works. For our example, we will use a tumbling window. Various types of windows are available in Kafka. Kafka calls this type of collection windowing. We'll look at the types of joins in a moment, but the first thing to note is that joins happen for data collected over a duration of time.

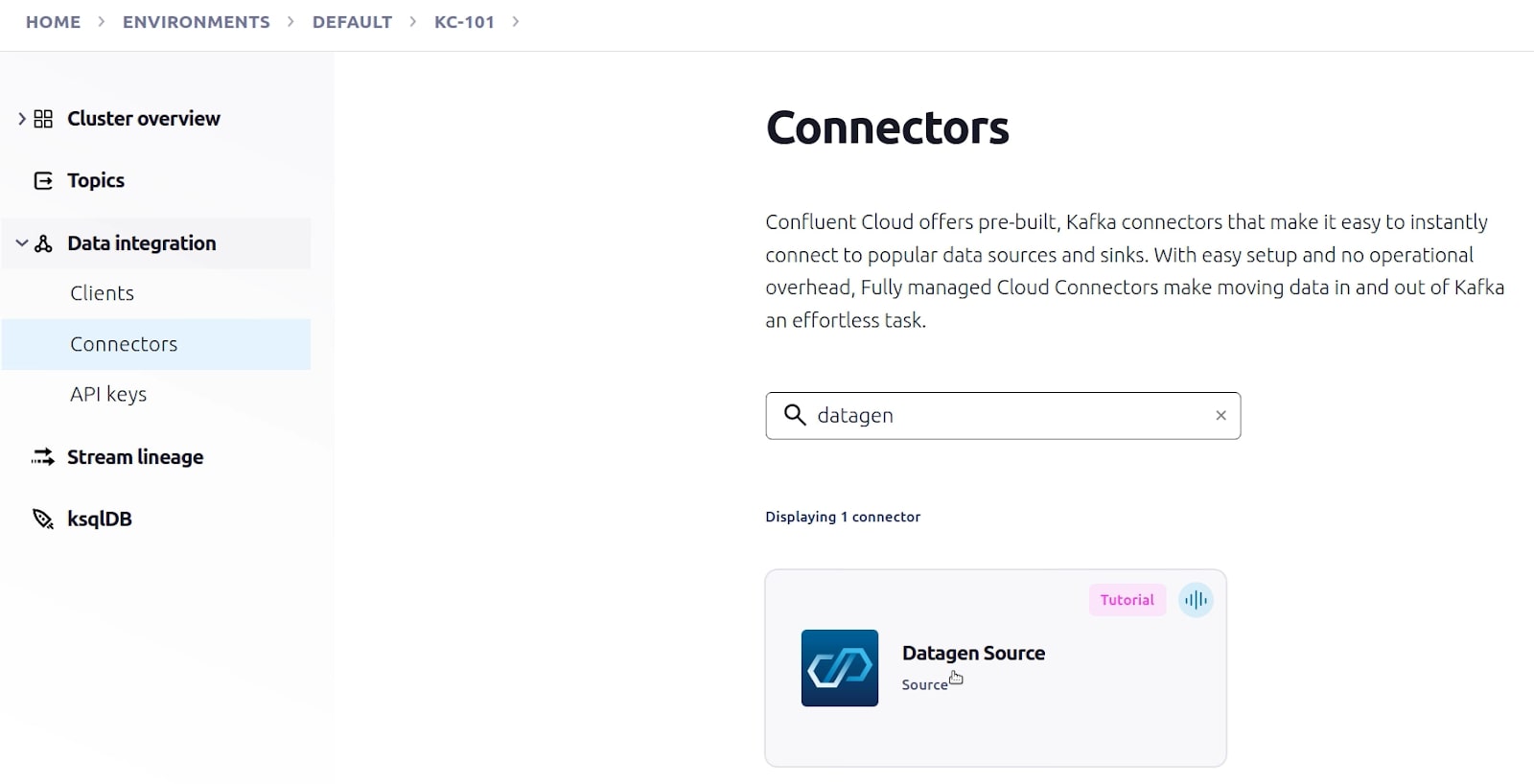

In Kafka, joins work differently because the data is always streaming. You are probably familiar with the concept of joins in a relational database, where the data is static and available in two tables. Kafka allows you to join records that arrive on two different topics. Before we start coding the architecture, let's discuss joins and windows in Kafka Streams. You can get the complete source code from the article's GitHub repository. In the next sections, we'll go through the process of building a data streaming pipeline with Kafka Streams in Quarkus. Figure 1 illustrates the data flow for the new application:įigure 1: Architecture of the data streaming pipeline. This type of application is capable of processing data in real-time, and it eliminates the need to maintain a database for unprocessed records. Our task is to build a new message system that executes data streaming operations with Kafka. If the data record doesn't arrive in the second queue within 50 seconds after arriving in the first queue, then another application processes the record in the database.Īs you might imagine, this scenario worked well before the advent of data streaming, but it does not work so well today.

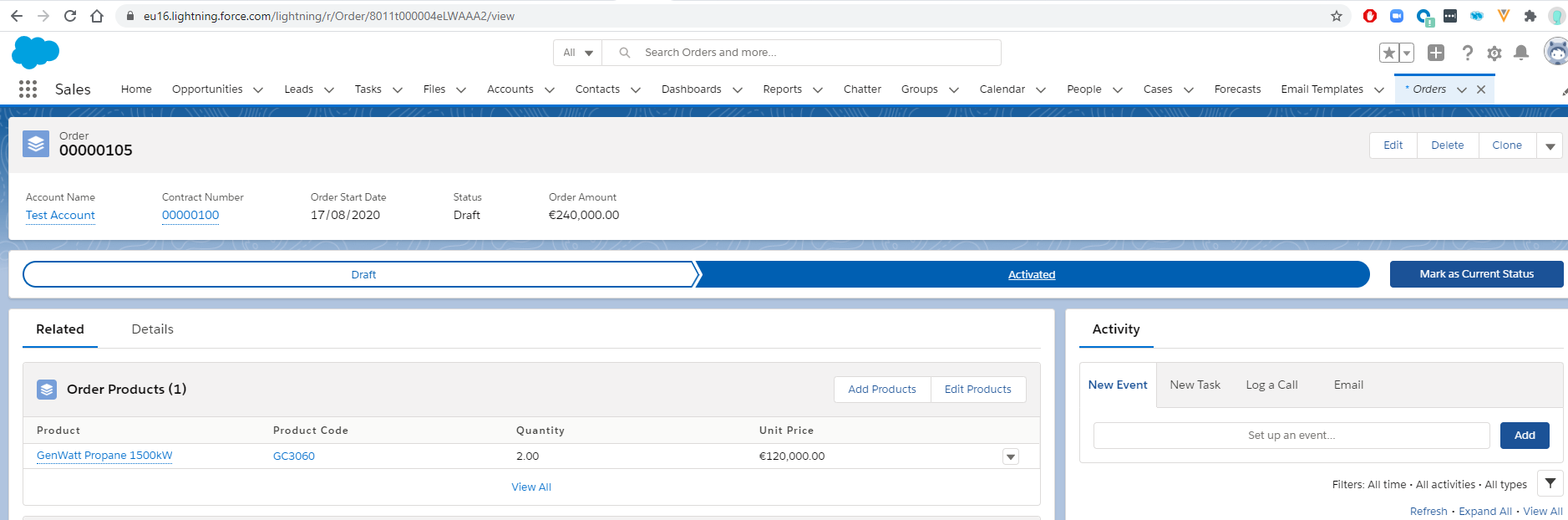

If the record is present, the application retrieves the data and processes the two data objects. It checks whether a record with the same key is present in the database. If the same data record arrives in the second queue within a few seconds, the application triggers the same logic.If it does not find a record with that unique key, the system inserts the record into the database for processing. When a data record arrives in one of the message queues, the system uses the record's unique key to determine whether the database already has an entry for that record.Each record in one queue has a corresponding record in the other queue. Data from two different systems arrives in two different messaging queues.Here's the data flow for the messaging system:

The traditional messaging systemĪs developers, we are tasked with updating a message-processing system that was originally built using a relational database and a traditional message broker. By the end of the article, you will have the architecture for a realistic data streaming pipeline in Quarkus. As we go through the example, you will learn how to apply Kafka concepts such as joins, windows, processors, state stores, punctuators, and interactive queries. In this article, we will build a Quarkus application that streams and processes data in real-time using Kafka Streams. The other systems can then follow the same cycle-i.e., filter, transform, store, or push to other systems.

Data gets generated from static sources (like databases) or real-time systems (like transactional applications), and then gets filtered, transformed, and finally stored in a database or pushed to several other systems for further processing. In real-time processing, data streams through pipelines i.e., moving from one system to another. But with the advent of new technologies, it is now possible to process data as and when it arrives. In typical data warehousing systems, data is first accumulated and then processed.
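To make the idea of an inner join over a tumbling window concrete, here is a minimal sketch in plain Java. It does not use the Kafka Streams API and is not the article's implementation; the `Event` type, the `innerJoin` method, the timestamp-based bucketing, and the 10-second window size are all assumptions chosen for illustration. Two records are joined only when they share a key and fall into the same fixed, non-overlapping window.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

public class TumblingWindowJoin {

    /** A keyed record with an event timestamp in milliseconds (hypothetical type). */
    public static final class Event {
        final String key;
        final String value;
        final long timestampMs;

        public Event(String key, String value, long timestampMs) {
            this.key = key;
            this.value = value;
            this.timestampMs = timestampMs;
        }
    }

    /**
     * Inner-joins two lists of events. Two events match only when they share
     * a key and their timestamps fall into the same tumbling window.
     */
    public static List<String> innerJoin(List<Event> left, List<Event> right, long windowSizeMs) {
        // Index the left side by (window start, key).
        Map<String, List<Event>> leftByWindowAndKey = new HashMap<>();
        for (Event l : left) {
            leftByWindowAndKey
                .computeIfAbsent(bucket(l, windowSizeMs), k -> new ArrayList<>())
                .add(l);
        }
        // Probe with the right side; records without a partner are dropped,
        // which is what makes this an inner join.
        List<String> joined = new ArrayList<>();
        for (Event r : right) {
            List<Event> matches = leftByWindowAndKey.get(bucket(r, windowSizeMs));
            if (matches == null) {
                continue;
            }
            for (Event l : matches) {
                joined.add(l.key + ":" + l.value + "+" + r.value);
            }
        }
        return joined;
    }

    /** Tumbling windows are fixed-size and start at multiples of the window size. */
    private static String bucket(Event e, long windowSizeMs) {
        long windowStart = Math.floorDiv(e.timestampMs, windowSizeMs) * windowSizeMs;
        return windowStart + "|" + e.key;
    }

    public static void main(String[] args) {
        List<Event> left = new ArrayList<>();
        left.add(new Event("a", "L1", 1_000L));
        left.add(new Event("b", "L2", 9_000L));

        List<Event> right = new ArrayList<>();
        right.add(new Event("a", "R1", 4_000L));   // same window as L1: joined
        right.add(new Event("b", "R2", 11_000L));  // next window: not joined

        System.out.println(innerJoin(left, right, 10_000L)); // prints [a:L1+R1]
    }
}
```

Note that the two `b` records are only two seconds apart but land in different windows, so they are not joined. This boundary effect is a property of tumbling windows in general and is worth keeping in mind when choosing a window size.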
